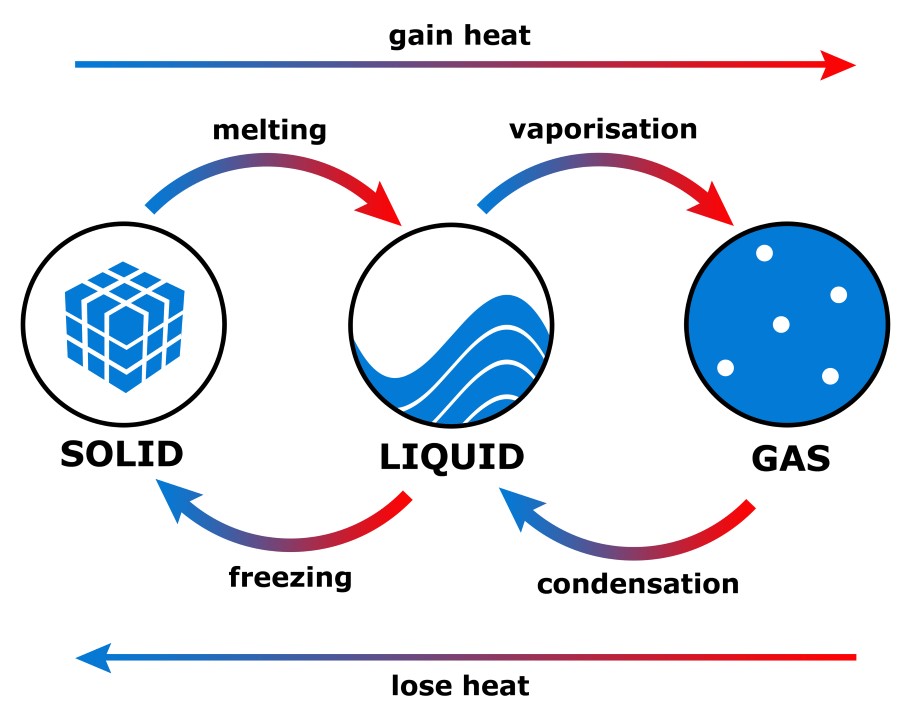

The term “cologarithm” was once commonly used but now has faded from memory. Here’s a plot of the frequency of the terms cologarithm and colog from Google’s Ngram Viewer.

The cologarithm base b is the logarithm base 1/b, or equivalently, the negative of the logarithm base b. I suppose people spoke of cologarithms more often when they did calculations with logarithm tables.

There’s one place where I would be tempted to use the colog notation, and that’s when speaking of Shannon entropy. The Shannon entropy of a random variable with N possible values, each with probability $p_i$, is defined to be

$$H = -\sum_{i=1}^{N} p_i \log_b p_i.$$

At first glance this looks wrong, as if entropy is negative. But you’re taking the logs of numbers less than 1, so the logs are negative, and the negative sign outside the sum makes everything positive.

If we write the same definition in terms of cologarithms, we have

$$H = \sum_{i=1}^{N} p_i \operatorname{colog}_b p_i,$$

which looks better, except it contains the unfamiliar colog.

These days entropy is almost always measured in units of bits, i.e. with logs taken base 2. When logs are taken base e, the result is in units of nats. And when the logs are taken base 10, the result is in units of dits. So binary logs give bits, natural logs give nats, and decimal logs give dits.

Bits are sometimes called “shannons” and dits were sometimes called “bans” or “hartleys.” The codebreakers at Bletchley Park during WWII used bans.

The unit “hartley” is named after Ralph Hartley. Hartley published a paper in 1928 defining what we now call Shannon entropy using logarithms base 10. Shannon cites Hartley in the opening paragraph of his famous paper A Mathematical Theory of Communication.

In Eq. (3.3), $S(E) = k \ln \Omega(E)$, the system has internal energy E consistent with its temperature and pressure. The entropy S is proportional to the system’s number of particles N, so it cannot be tabulated numerically in handbooks or databases. Instead, the entropy per mole, $S_{mol} = S/n$, where $n = N/N_A$ and $N_A$ is Avogadro’s number, is tabulated. I prefer to work with the number of molecules N rather than the number of moles. The dimensionless entropy satisfies the property

$$S_{dim} = \frac{S}{k} = \frac{N S_{mol}}{R},$$

where the universal gas constant is $R = N_A k = 8.3145$ J K$^{-1}$ mol$^{-1}$. Going one step further, define $\sigma$, the dimensionless entropy per particle,

$$\sigma = \frac{S_{dim}}{N} = \frac{S_{mol}}{R}.$$

The kelvin can be viewed as an energy, i.e., 1 K = $1.3807 \times 10^{-23}$ J, and tempergy and internal energy have the same units. However, they are very different entities physically. Internal energy is extensive and represents a stored energy. Tempergy is intensive, has energy units, and is not related to a stored system energy in general, as I discuss below.

Key Point 6.9 If temperature had been defined historically as an energy, entropy would have been dimensionless by definition and we might never have encountered the Kelvin temperature scale or Boltzmann’s constant.

Numerics

Dimensionless entropy per molecule. What are typical values of $\sigma$, the dimensionless entropy per molecule? Consider graphite, with molar entropy $S_{mol} = 5.7$ J K$^{-1}$ mol$^{-1}$ at standard temperature and pressure. Eq. (6.13) implies the dimensionless entropy per particle, $\sigma \approx 0.69$. Similarly, one finds that diamond has $\sigma \approx 0.29$. At T = 1 K, solid silver has $\sigma = 8.5 \times 10^{-5}$, which is consistent with $\sigma$ approaching zero for T (and $\tau$) $\to 0$. It turns out that the dimensionless entropies per particle of monatomic solids are typically … $\lambda^3$, with $\lambda^3 = \left(h^2/(2\pi m k T)\right)^{3/2}$.

A helpful result from statistical mechanics is that below the critical temperature, the internal energy per excited particle of an ideal Bose-Einstein gas is $E/N_{ex} = 0.77\tau$. From Eqs. (4.6) and (4.7), the energy per particle is

$$\frac{E}{N} = 0.77\,\tau \left(\frac{T}{T_c}\right)^{3/2}.$$

Thus $(E/N)/\tau$ is not constant, but rather is proportional to $\tau^{3/2}$. Tempergy itself is not a good measure of the internal energy per particle for an ideal gas of bosons below the critical temperature.

Next, consider a system with N independent, two-state particles, each with possible energies 0 and $\epsilon > 0$. For high temperatures, i.e., with $\tau/\epsilon \gg 1$, the fraction of particles with energy $\epsilon$ approaches 1/2. That is, the number of excited particles $N_{ex}$ approaches $N/2$. Thus, $N_{ex}/N \to 0.5$, $E/N = N_{ex}\epsilon/N \to 0.5\epsilon$, and the ratio $(E/N)/\tau \to 0$ for $\tau \to \infty$. Figure 6.7 shows that $E/N < 0.28\tau$ for all values of $\tau$. Thus, for this simple model of two-state independent particles, $\tau$ is not a good indicator of the average energy per particle.

Dimensionless entropy and $\tau$. It follows from Eqs. (6.11) and (6.12) that for an isothermal heat process at constant volume, $\Delta S_{dim} = Q/\tau$, so if $\Delta S_{dim} = 1$, then $Q = \tau$.
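The Shannon entropy definition and the bits, nats, and dits units discussed in the text can be checked numerically. The following is a minimal sketch, not anything taken from the original post: the helper names `colog` and `shannon_entropy` and the example distribution are illustrative assumptions.

```python
import math


def colog(x, base):
    """Cologarithm: the negative of the logarithm of x in the given base."""
    return -math.log(x, base)


def shannon_entropy(probs, base=2.0):
    """Sum of p * colog(p) over outcomes; zero-probability outcomes add nothing."""
    return sum(p * colog(p, base) for p in probs if p > 0)


# Hypothetical example distribution over four outcomes.
probs = [0.5, 0.25, 0.125, 0.125]

h_bits = shannon_entropy(probs, base=2)       # binary logs give bits
h_nats = shannon_entropy(probs, base=math.e)  # natural logs give nats
h_dits = shannon_entropy(probs, base=10)      # decimal logs give dits

print(f"{h_bits:.4f} bits, {h_nats:.4f} nats, {h_dits:.4f} dits")
```

Because changing the base only rescales the logarithm, the three results differ by constant factors: nats are bits times ln 2, and dits are bits times log10 of 2.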
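The numbers quoted for tempergy and for the dimensionless entropy per particle can be reproduced with a few lines of arithmetic. This sketch assumes that tempergy is Boltzmann's constant times the Kelvin temperature and that the dimensionless entropy per particle is the molar entropy divided by the gas constant R. Both are readings of the text rather than formulas copied from it, and the function names are hypothetical.

```python
# Exact SI values of the defining constants (2019 redefinition).
K_B = 1.380649e-23    # Boltzmann constant, J/K
N_A = 6.02214076e23   # Avogadro's number, 1/mol
R = K_B * N_A         # universal gas constant, about 8.3145 J/(K mol)


def tempergy(temperature_kelvin):
    """Assumed definition: tempergy = k * T, an energy in joules."""
    return K_B * temperature_kelvin


def sigma_from_molar_entropy(s_mol):
    """Assumed relation: dimensionless entropy per particle = S_mol / R."""
    return s_mol / R


# Graphite at standard temperature and pressure, molar entropy from the text.
sigma_graphite = sigma_from_molar_entropy(5.7)

print(f"R = {R:.4f} J/(K mol)")
print(f"tempergy at 1 K = {tempergy(1.0):.4e} J")
print(f"sigma (graphite) = {sigma_graphite:.3f}")
```

Working per particle rather than per mole is what makes the result a pure number: the units of molar entropy and of R cancel.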
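The two-state claims (the excited fraction approaching 1/2 at high tempergy, and the energy per particle staying below 0.28 times the tempergy) can be explored numerically. The sketch below assumes the standard Boltzmann-factor result for independent two-state particles, in which the excited fraction is 1/(exp(ε/τ) + 1). That formula is not written out in the text, and the function names and grid are illustrative.

```python
import math


def excited_fraction(tau, eps=1.0):
    """Assumed Boltzmann result: fraction of particles with energy eps."""
    return 1.0 / (math.exp(eps / tau) + 1.0)


def energy_per_particle(tau, eps=1.0):
    """E/N = eps * N_ex/N for independent two-state particles."""
    return eps * excited_fraction(tau, eps)


# Scan tau/eps from 0.01 to 100 and track the ratio (E/N)/tau.
taus = [0.01 * i for i in range(1, 10001)]
ratios = [energy_per_particle(t) / t for t in taus]
max_ratio = max(ratios)
tau_at_max = taus[ratios.index(max_ratio)]

print(f"largest (E/N)/tau on the grid: {max_ratio:.4f} at tau/eps = {tau_at_max:.2f}")
print(f"excited fraction at tau/eps = 1e6: {excited_fraction(1e6):.7f}")
```

Under this assumption the ratio (E/N)/τ peaks at a moderate tempergy and falls toward zero in both limits, which is why τ is a poor stand-in for the average energy per particle in this model.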
